Synthetic Identity Fraud in the Age of AI: How Fake People Are Beating Real Systems

AI has radically reduced the friction involved in building a synthetic identity. Generative models can now create realistic profile photos that pass facial analysis checks, generate identity documents that mimic government formats, and fabricate supporting artifacts like utility bills or bank statements with consistent metadata. This allows synthetic identities to pass Know Your Customer checks that were never designed to detect AI-generated inputs.

Once established, these identities behave strategically. They open low-risk accounts, make small purchases, pay balances on time, and slowly build trust with lenders and platforms. This grooming phase can last months or even years. When the identity reaches sufficient credibility, it is used to extract value through loans, credit lines, buy-now-pay-later programs, or coordinated bust-out fraud. By the time institutions recognize the pattern, the identity disappears, leaving no real person to pursue.
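To make the grooming-then-extraction pattern concrete, here is a minimal sketch of how a long, quiet history followed by a sudden spike might be flagged. It is an illustration only: the function name, the use of monthly credit utilization as the signal, and every threshold are assumptions chosen for the example, not values taken from any real fraud system.

```python
from statistics import mean


def looks_like_bust_out(monthly_utilization, quiet_months=6,
                        quiet_ceiling=0.30, spike_floor=0.90):
    """Return True if a quiet utilization history ends in a sudden spike.

    monthly_utilization: balance / credit-limit ratios, oldest first.
    All thresholds are illustrative assumptions, not industry standards.
    """
    if len(monthly_utilization) < quiet_months + 1:
        return False  # not enough history to judge

    history, latest = monthly_utilization[:-1], monthly_utilization[-1]
    recent = history[-quiet_months:]  # the months just before the latest one

    # Quiet, well-behaved months followed by a near-maxed-out balance.
    return mean(recent) <= quiet_ceiling and latest >= spike_floor


groomed = [0.10] * 8 + [0.95]   # months of small balances, then maxed out
steady = [0.10] * 9             # small balances throughout
print(looks_like_bust_out(groomed), looks_like_bust_out(steady))
```

A single rule like this would miss slower extraction and would flag some legitimate customers, so it is better read as one signal among many than as a detector in its own right.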

Artificial intelligence has also changed how these identities interact with humans. Voice cloning tools can replicate an individual’s speech patterns using seconds of publicly available audio. Deepfake video can now be used in live verification checks, defeating what many organizations believed was a high-confidence safeguard. This means fraudsters can escalate from automated onboarding to human review without breaking character.

The implications extend beyond banks and fintech platforms. Synthetic identities are increasingly used as launchpads for broader fraud ecosystems. Once an identity is accepted by one system, it can be leveraged across others. Accounts opened at streaming services, e-commerce platforms, or gig economy apps can provide additional behavioral data that strengthens the identity’s perceived legitimacy elsewhere. AI thrives on this feedback loop.

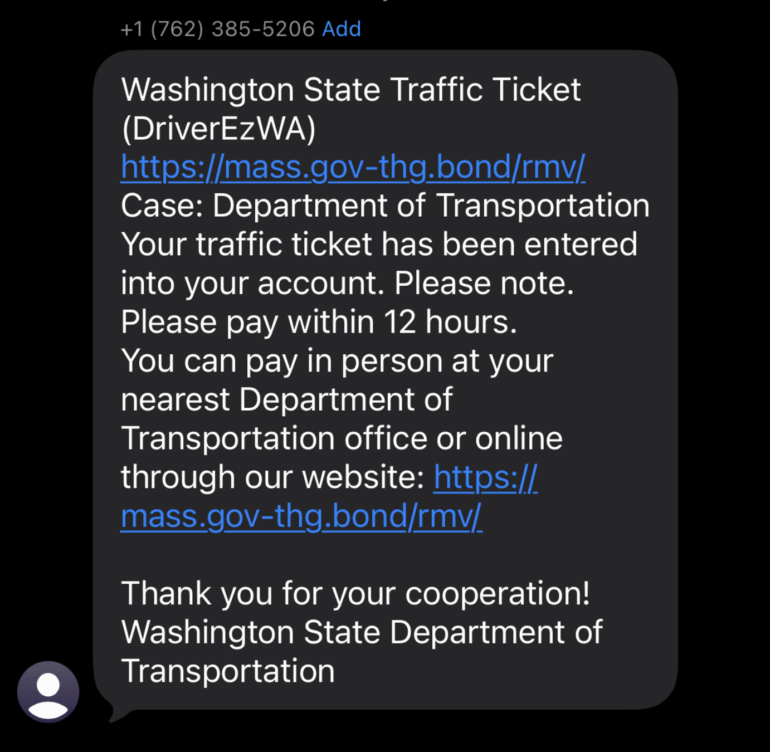

Deepfake-enabled impersonation is now being layered on top of synthetic identities for social engineering attacks. Victims receive calls that sound exactly like a bank representative or a trusted executive. Some scams now include video messages designed to reinforce urgency and credibility. Traditional red flags such as poor grammar, strange accents, or inconsistent stories no longer apply when AI handles presentation.

For consumers, this creates a delayed-impact risk that is easy to miss. Because synthetic identities often use real Social Security numbers, victims may not learn about the fraud until years later, when credit is denied or collection notices appear for accounts they never opened. Children are particularly vulnerable because their credit files may go unchecked for a decade or more.

For organizations, the failure point is often overreliance on static verification. Identity documents, selfies, and basic biometrics are increasingly easy to fabricate convincingly. AI systems are excellent at producing artifacts that look correct in isolation. Detection now requires behavioral analysis, cross-platform intelligence, and longitudinal monitoring rather than one-time checks.
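One simple way to look beyond artifacts "in isolation" is to compare records against each other. The sketch below assumes a hypothetical list of application records with `ssn`, `name`, and `dob` fields (the field names and sample values are invented for illustration) and reports any Social Security number that appears with more than one distinct name and date of birth.

```python
from collections import defaultdict


def ssns_with_conflicting_identities(applications):
    """Map each SSN to its identities when it appears with more than one.

    applications: iterable of dicts with hypothetical keys
    "ssn", "name", and "dob" (date of birth as a string).
    """
    identities_by_ssn = defaultdict(set)
    for app in applications:
        # Normalize the name so trivial casing or spacing differences
        # do not count as separate identities.
        identity = (app["name"].strip().lower(), app["dob"])
        identities_by_ssn[app["ssn"]].add(identity)

    return {
        ssn: sorted(identities)
        for ssn, identities in identities_by_ssn.items()
        if len(identities) > 1
    }


# Made-up sample records; the SSNs are deliberately invalid placeholders.
sample = [
    {"ssn": "000-00-0001", "name": "Alex Rivera", "dob": "1990-04-12"},
    {"ssn": "000-00-0001", "name": "Jordan Blake", "dob": "1985-11-02"},
    {"ssn": "000-00-0002", "name": "Sam Lee", "dob": "2001-07-30"},
    {"ssn": "000-00-0002", "name": " sam lee ", "dob": "2001-07-30"},
]
print(ssns_with_conflicting_identities(sample))
```

A match here is a reason for closer review rather than proof of fraud, since data entry errors and legal name changes can produce the same pattern.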

There are warning signs people should take seriously: unexpected verification calls asking for personal information; requests to “confirm” identity through video or voice prompts initiated by the caller; credit alerts tied to unfamiliar accounts; and messages that pressure immediate action to prevent account freezes or security breaches.

Protection remains unglamorous but effective. Never provide sensitive information in response to incoming calls, texts, or videos. Initiate contact using official channels you independently verify. Freeze or monitor credit reports. Use app-based multi-factor authentication rather than SMS. Reduce publicly available personal data, especially voice and video content that can be harvested for cloning.

The reality is simple. AI did not invent fraud, but it eliminated the constraints that once limited how far and how fast certain scams could scale. Synthetic identity fraud paired with deepfake impersonation is now an industrialized crime model. It defeats outdated controls, exploits trust in automation, and leaves little evidence behind. Awareness is no longer optional. It is the first and most necessary line of defense.